How an AI Agent Turns Forty Minutes of Fraud Investigation into a Decision-Ready Evidence Package

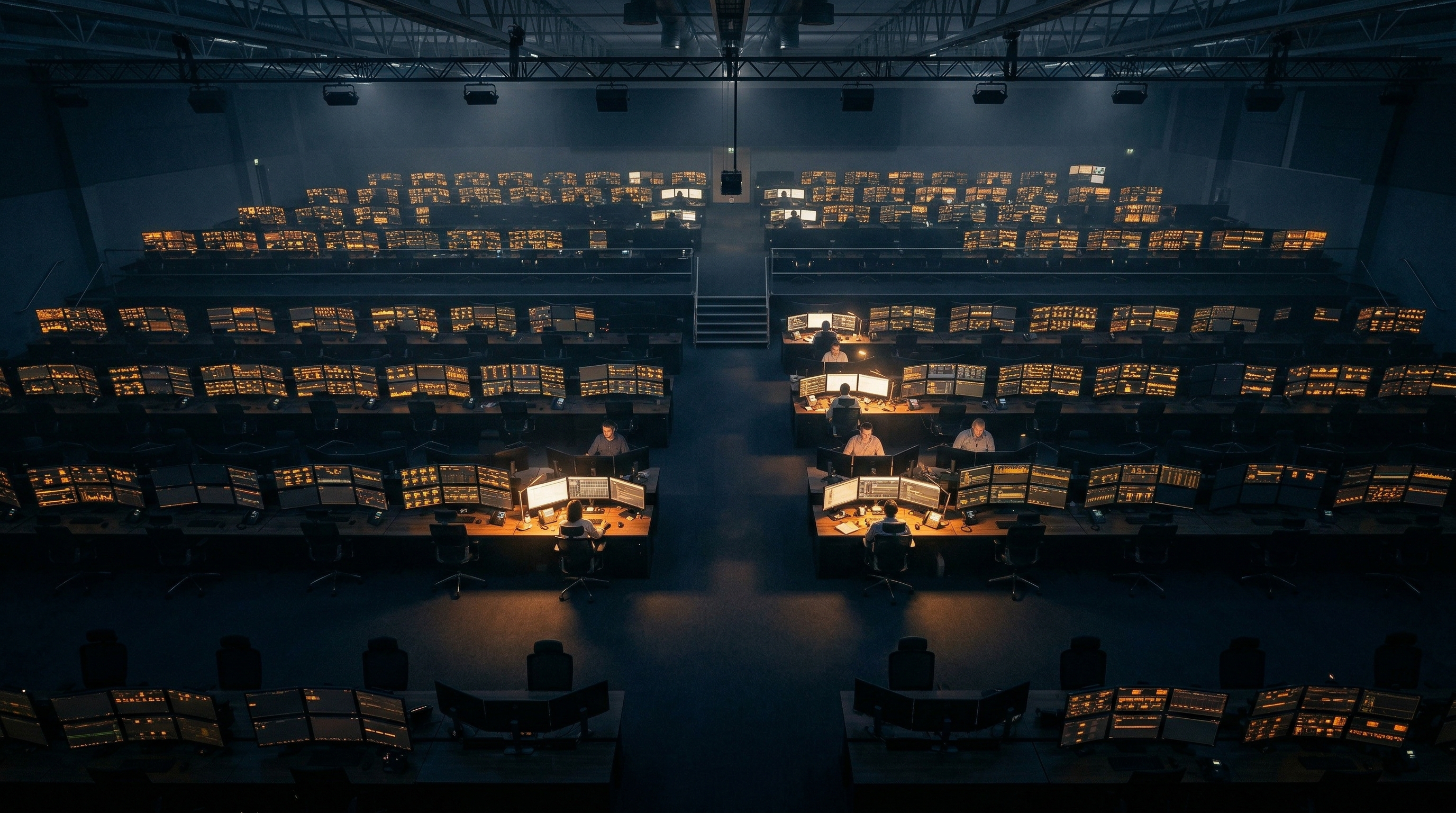

For fraud analysts spending most of their day assembling evidence for cases that turn out to be nothing.

Five Alerts Before Coffee, and Four of Them Are Nothing

It's Monday morning at a 200-person regional community bank. The fraud analyst opens the case management queue and finds five transactions flagged overnight. A $4,999 wire to a first-time vendor with a generic business name. Two rapid-fire retail purchases under $500 each from the same account in Chicago, 48 minutes apart. A $12,750 wire to an international trading entity from an account that just changed its profile. A $2,100 electronics purchase from an account that's been dormant for six months.

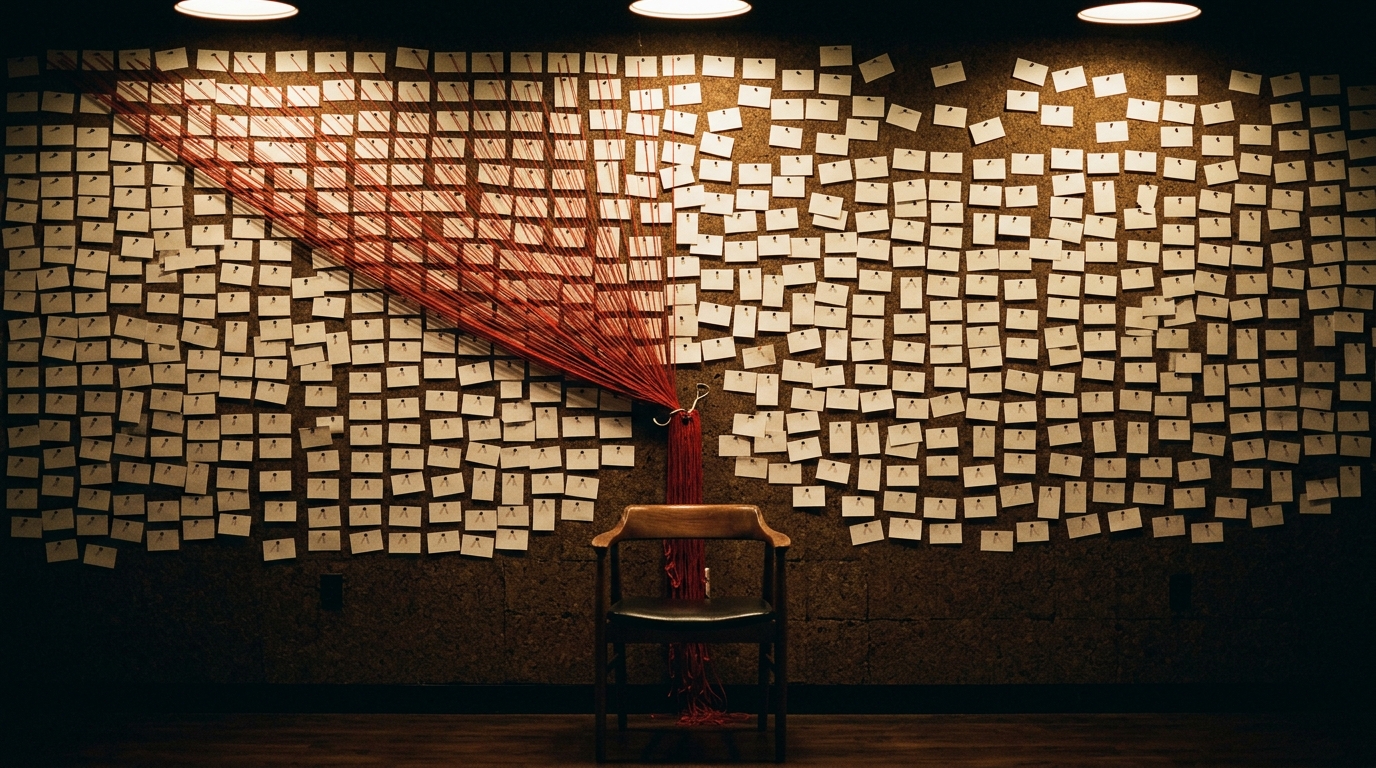

Each case needs the same work. Pull the account's transaction history. Calculate how many transactions came from that account in the last hour, the last day, the last week. Cross-reference the transaction against six known fraud patterns, including structuring, threshold testing, account takeover, and dormant reactivation. Write up an evidence summary with a risk assessment and a recommended action. Package everything into a format the compliance director can act on before the 48-hour initial review window closes.

One case takes 30 to 45 minutes. Five cases, up to four hours. And this is a light morning. Some days it's twelve alerts. Some days it's forty-seven. The fraud analyst already knows, before opening a single case file, that four of those five are probably legitimate transactions that happened to trip a rule. But "probably" isn't good enough when missing 1% of genuine fraud at scale translates to millions in losses. So every case gets the full treatment.

The overtime isn't the worst part. The worst part is that the one case that actually matters, the $12,750 wire that has all the hallmarks of a business email compromise, is sitting at the bottom of the queue aging toward the 30-day SAR filing deadline while the analyst builds evidence packages for transactions that will be cleared by lunch.

This is the daily arithmetic of fraud operations at any financial institution with an alert volume that outgrew its investigation capacity. And that's most of them. Nearly 7 in 10 financial institutions increased fraud-detection spending last year (PYMNTS Intelligence / Block, 2025). The detection side is well-funded. The investigation side, where alerts become evidence packages, remains almost entirely manual.

Why Better Detection Makes the Investigation Bottleneck Worse

The instinct is to fix the detection engine. Tune the rules. Reduce the false positives. But here's what actually happens: a tighter detection model catches different patterns, which generates different alerts, which still need investigation. The volume shifts, but the per-case workload doesn't.

Alert enrichment in fraud detection is the process of taking a flagged transaction and assembling the contextual evidence an analyst needs to make a disposition decision: account history, velocity metrics, pattern matches, and a risk assessment. Rule-based transaction monitoring systems run at false positive rates of 95-98%, meaning fraud analysts spend nearly all their investigation time on alerts that turn out to be legitimate transactions (FluxForce, 2025). At $500 to $1,500 per investigation, a mid-market bank processing 50,000 alerts annually spends roughly $24 million to $74 million on false positive investigation alone.
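
The false positive cost is simple multiplication over three inputs cited in the paragraph above: alert volume, false positive rate, and cost per investigation. A minimal Python sketch for checking the figure or rerunning it with your own numbers; the function and constant names are illustrative:

```python
# Back-of-envelope annual spend on investigating false positives.
# Inputs are the figures cited in the text; names are illustrative.
ALERTS_PER_YEAR = 50_000


def false_positive_cost(alerts: int, fp_rate: float, cost_per_case: float) -> float:
    """Annual spend on alerts that turn out to be legitimate transactions."""
    return alerts * fp_rate * cost_per_case


low = false_positive_cost(ALERTS_PER_YEAR, 0.95, 500)     # best case
high = false_positive_cost(ALERTS_PER_YEAR, 0.98, 1_500)  # worst case
print(f"${low:,.0f} to ${high:,.0f}")
```

The per-case cost drives most of the spread, so it is the input worth measuring first.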

The structural problem is that enrichment requires both volume processing and judgment in the same step. You need to calculate velocity across three time windows (transactions in the last hour, the last day, the last week), match against a library of known patterns, and then synthesize an evidence narrative that explains why this particular transaction, at this amount, to this vendor, from this account, at this time, does or doesn't look like fraud. A rule engine handles the velocity math. A person handles the synthesis. Neither one alone covers the full step.
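
Of the two halves, the velocity math is the mechanical one. A minimal Python sketch of counting an account's transactions in the three trailing windows, assuming the history arrives as a sorted list of timestamps; the window names and sample timestamps are hypothetical:

```python
from bisect import bisect_left, bisect_right
from datetime import datetime, timedelta

# Hypothetical window configuration matching the three windows in the text.
WINDOWS = {
    "last_hour": timedelta(hours=1),
    "last_day": timedelta(days=1),
    "last_week": timedelta(weeks=1),
}


def velocity(history: list[datetime], as_of: datetime) -> dict[str, int]:
    """Count transactions in each trailing window ending at `as_of`.

    `history` must be sorted ascending. A transaction exactly at the window
    start or at `as_of` is counted; anything after `as_of` is ignored.
    """
    end = bisect_right(history, as_of)
    return {
        name: end - bisect_left(history, as_of - span)
        for name, span in WINDOWS.items()
    }


# Hypothetical account history relative to the flagged transaction.
flag_time = datetime(2025, 3, 3, 9, 0)
history = sorted(
    [flag_time - timedelta(minutes=m) for m in (5, 12, 40)]  # same session
    + [flag_time - timedelta(hours=h) for h in (3, 20)]      # earlier that day
    + [flag_time - timedelta(days=d) for d in (3, 6, 10)]    # earlier weeks
)
print(velocity(history, flag_time))
# {'last_hour': 3, 'last_day': 5, 'last_week': 7}
```

Binary search keeps each window lookup logarithmic in the history length, which matters for accounts with years of activity.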

Case management platforms like Actimize or FICO Falcon are excellent at generating alerts and routing them into queues, but the investigation step itself, where an analyst opens a case, pulls history, cross-references patterns, and writes the evidence package, remains manual. The platforms manage the queue. They don't do the work inside each case.

The same enrichment bottleneck exists wherever analysts receive flagged events and must manually assemble evidence packages. A two-person fraud operations team at a Series B payments fintech faces the identical problem at a different scale: their real-time detection engine flags three times more transactions than the team can review in a shift. The vocabulary is different (they call them "events" instead of "cases," and the queue is a Slack channel instead of a dashboard), but the analyst still pulls history, calculates velocity, matches patterns, and writes up a summary. And the math still doesn't work. Alert volumes grow 30-40% annually (KPMG, 2025). Staffing budgets don't.

Adding headcount is the standard response. But experienced fraud analysts are scarce, cost $80,000 to $120,000 fully loaded, and take months to train. When they leave, which happens frequently in roles dominated by repetitive triage work, their pattern recognition expertise and institutional knowledge leave with them. The replacement gets the same overwhelming queue on day one.

Reducing detection sensitivity is the other lever. Some teams tune down their anomaly detectors to cut the queue, which lets real fraud through. That's trading a workload problem for a loss problem.

The bottleneck in fraud operations isn't detection. It's the 30 to 45 minutes between "alert flagged" and "evidence package on the analyst's desk," repeated thousands of times a year for cases that are overwhelmingly false positives.

This is the problem lasa.ai solves for fraud operations teams: automated alert enrichment that builds decision-ready evidence packages for every flagged transaction, so analysts review findings instead of assembling them.

See what this looks like for your alert queue →

What Changes When the Evidence Package Is Already Built

The shift isn't faster detection. It's what happens after detection. Instead of a fraud analyst opening a case and spending 40 minutes pulling history, calculating velocity, and writing up evidence, an AI agent handles the entire enrichment step from the moment a transaction is flagged. Not a template. Not a rule builder that routes alerts into a different queue. An agent that receives the flagged transaction, pulls the account's full history, calculates velocity across configurable time windows, matches the transaction against a library of known fraud pattern signatures, and produces a structured evidence package with a risk assessment and recommended action.

The agent delivers complete outcomes, but follows a defined, auditable process under the hood. The velocity calculations are deterministic. The pattern matching runs against a curated library. The evidence synthesis uses AI to produce the narrative that ties data points into a coherent assessment. Agent-level outcomes with workflow-level reliability. Every step is traceable, every decision path is documented, and the pattern library is yours to configure.

The fraud analyst's job changes from assembling evidence to reviewing it. They open a case and the package is already there.

From Flagged Transaction to Decision-Ready Package in Four Steps

Here's what actually happens when a transaction gets flagged.

The agent receives five flagged transactions from the overnight detection run. Each one arrives with a transaction identifier, dollar amount, vendor name, merchant category, location, timestamp, risk score, and the reason it was flagged. A $4,999 payment to a first-time business services vendor in New York, risk score 0.91, flagged for a round amount near the reporting threshold. Two retail purchases under $500 in Chicago from the same account within 48 minutes, each scored at 0.88, flagged for velocity spikes and a structuring pattern. A $12,750 wire to an international import vendor in Miami, risk score 0.93, flagged for a large first-time international transfer following an account profile change. A $2,100 electronics purchase in San Francisco from a dormant account, risk score 0.86, flagged for reactivation.
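
The alert fields listed above map naturally onto a typed record. A minimal Python sketch, assuming the detection run hands the agent one JSON-style dict per alert; the class name, field names, and any sample values not given in the text (ID, vendor name, timestamp) are hypothetical placeholders:

```python
from dataclasses import dataclass
from datetime import datetime
from decimal import Decimal


@dataclass(frozen=True)
class FlaggedTransaction:
    """One alert from the overnight detection run (hypothetical field names)."""
    transaction_id: str
    amount: Decimal        # Decimal avoids binary floating-point error on currency
    vendor: str
    merchant_category: str
    location: str
    timestamp: datetime
    risk_score: float      # detection-engine score, expected in [0, 1]
    flag_reason: str

    def __post_init__(self) -> None:
        # Reject malformed scores early rather than mis-ranking the case later.
        if not 0.0 <= self.risk_score <= 1.0:
            raise ValueError(f"risk_score out of range: {self.risk_score}")

    @classmethod
    def from_dict(cls, raw: dict) -> "FlaggedTransaction":
        """Build a record from one JSON-style row of the alert feed."""
        return cls(
            transaction_id=raw["transaction_id"],
            amount=Decimal(str(raw["amount"])),
            vendor=raw["vendor"],
            merchant_category=raw["merchant_category"],
            location=raw["location"],
            timestamp=datetime.fromisoformat(raw["timestamp"]),
            risk_score=float(raw["risk_score"]),
            flag_reason=raw["flag_reason"],
        )


# The $4,999 New York alert; ID, vendor name, and timestamp are placeholders.
alert = FlaggedTransaction.from_dict({
    "transaction_id": "TXN-0001",
    "amount": "4999.00",
    "vendor": "Generic Business Services LLC",
    "merchant_category": "business services",
    "location": "New York, NY",
    "timestamp": "2025-03-03T02:14:00",
    "risk_score": 0.91,
    "flag_reason": "round amount near reporting threshold",
})
```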

First, the agent pulls each account's transaction history and calculates velocity. For the Chicago account, the history shows seven prior transactions in the same session, all at different retail locations within 45 minutes: $445.00, $498.75, $475.20, $460.00, $488.30, $499.99, and $470.50. Every one is under $500. That velocity count is the first signal in the evidence package.

Second, the agent matches each transaction against six known fraud patterns: structuring (rapid sub-threshold transactions), new-vendor large-amount transfers, geographic anomalies, dormant account reactivation, shell company indicators, and threshold testing. The Chicago transactions match both the structuring pattern and the threshold testing pattern. The $4,999 wire matches threshold testing and shell company indicators. The Miami wire matches the new-vendor large-amount pattern, and the profile change that preceded it points to a possible account takeover. The $2,100 electronics purchase matches dormant account reactivation.
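
The six patterns can be expressed as named predicates over facts pre-computed from the account history. A minimal Python sketch, assuming hypothetical thresholds (a 5% "just below" margin, a $10,000 large-transfer floor, 180 days for dormancy) that a real library would tune per institution. The sample inputs reproduce the matches described for the $4,999 wire and one Chicago purchase:

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical library settings; a real deployment would configure these.
STRUCTURING_CEILING = 500.00
WATCHED_THRESHOLDS = (5_000.00, STRUCTURING_CEILING)
NEAR_MARGIN = 0.05          # "just below" = within 5% under a watched threshold
LARGE_TRANSFER = 10_000.00
DORMANT_DAYS = 180


@dataclass
class MatchContext:
    """Facts about one transaction, pre-computed from the account history."""
    amount: float
    first_time_vendor: bool
    vendor_name_generic: bool
    sub_threshold_count_in_session: int
    days_since_last_activity: int
    location_seen_before: bool


def _near_threshold(amount: float) -> bool:
    return any(t * (1 - NEAR_MARGIN) <= amount < t for t in WATCHED_THRESHOLDS)


PATTERN_LIBRARY: dict[str, Callable[[MatchContext], bool]] = {
    "structuring": lambda c: c.amount < STRUCTURING_CEILING
    and c.sub_threshold_count_in_session >= 3,
    "new_vendor_large_amount": lambda c: c.first_time_vendor and c.amount >= LARGE_TRANSFER,
    "geographic_anomaly": lambda c: not c.location_seen_before,
    "dormant_reactivation": lambda c: c.days_since_last_activity >= DORMANT_DAYS,
    "shell_company_indicators": lambda c: c.first_time_vendor and c.vendor_name_generic,
    "threshold_testing": lambda c: _near_threshold(c.amount),
}


def match_patterns(ctx: MatchContext) -> list[str]:
    """Return every library pattern the transaction matches, in library order."""
    return [name for name, rule in PATTERN_LIBRARY.items() if rule(ctx)]


wire_4999 = MatchContext(amount=4999.00, first_time_vendor=True, vendor_name_generic=True,
                         sub_threshold_count_in_session=0, days_since_last_activity=2,
                         location_seen_before=True)
chicago = MatchContext(amount=499.99, first_time_vendor=False, vendor_name_generic=False,
                       sub_threshold_count_in_session=7, days_since_last_activity=0,
                       location_seen_before=True)
print(match_patterns(wire_4999))  # ['shell_company_indicators', 'threshold_testing']
print(match_patterns(chicago))    # ['structuring', 'threshold_testing']
```

Keeping each pattern as a named entry in one dictionary makes the library easy to audit and extend without touching the matching loop.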

Third, for each flagged transaction, the agent synthesizes an evidence narrative. Not a list of matched rules, but a readable summary: "Exhibits high-confidence indicators of threshold testing. The amount is exactly $1 below the $5,000 regulatory review trigger. The use of a generic business name suggests a shell company setup. Given the low account velocity, this high-value payment is a severe outlier." The narrative includes a risk assessment (critical, high, medium, or low) and a specific recommended action (freeze the account, require supporting documentation, initiate manual verification).
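
The narrative comes from the AI synthesis step, but the package around it is plain structured data. A minimal Python sketch, assuming hypothetical score cutoffs for the four tiers and an illustrative mapping from tier to the actions named above. In the described system the tier is part of the synthesized assessment, so the cutoffs here are only a deterministic stand-in:

```python
from dataclasses import dataclass, field

# Hypothetical cutoffs, highest first; a deterministic stand-in for the
# risk assessment the synthesis step produces.
TIER_CUTOFFS = ((0.90, "critical"), (0.80, "high"), (0.60, "medium"))

# Actions named in the text, mapped to tiers as an illustrative assumption.
RECOMMENDED_ACTION = {
    "critical": "Freeze the account",
    "high": "Require supporting documentation",
    "medium": "Initiate manual verification",
    "low": "Document and close",  # hypothetical; the text names no low-tier action
}


@dataclass
class EvidencePackage:
    transaction_id: str
    risk_tier: str
    recommended_action: str
    matched_patterns: list[str] = field(default_factory=list)
    velocity_count: int = 0
    narrative: str = ""  # supplied by the AI synthesis step, not generated here


def risk_tier(score: float) -> str:
    """Map a score to the first tier whose cutoff it meets."""
    for cutoff, tier in TIER_CUTOFFS:
        if score >= cutoff:
            return tier
    return "low"


def build_package(transaction_id: str, score: float, patterns: list[str],
                  velocity_count: int, narrative: str) -> EvidencePackage:
    tier = risk_tier(score)
    return EvidencePackage(transaction_id, tier, RECOMMENDED_ACTION[tier],
                           list(patterns), velocity_count, narrative)


# Placeholder ID and velocity count for the $4,999 wire.
pkg = build_package(
    "TXN-0001", 0.91, ["shell_company_indicators", "threshold_testing"], 1,
    "Exhibits high-confidence indicators of threshold testing.",
)
print(pkg.risk_tier, "->", pkg.recommended_action)  # critical -> Freeze the account
```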

Fourth, the agent compiles everything into a batch report. The report opens with an executive summary covering the batch: five transactions reviewed, two critical-risk, three high-risk, total value at risk of $20,828.50. Each individual case gets a detail section with the amount, vendor, location, risk score, matched patterns, velocity count, evidence summary, and recommended next step. The report closes with prioritized recommendations: immediate account freezes for the two critical cases, outbound verification calls for the Chicago structuring pattern, enhanced know-your-business review on the first-time vendor, and a profile change audit for the Miami wire.
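
The executive summary and the severity ordering are simple aggregations over the finished packages. A minimal Python sketch, assuming each case already carries a tier. The tiers are assigned for illustration, and because the text says only that each Chicago purchase was under $500, the two Chicago amounts are placeholders chosen so the batch total matches the $20,828.50 in the report:

```python
from collections import Counter
from dataclasses import dataclass
from decimal import Decimal

SEVERITY = ("critical", "high", "medium", "low")  # report order, most severe first


@dataclass
class Case:
    transaction_id: str
    amount: Decimal
    risk_tier: str


def executive_summary(cases: list[Case]) -> str:
    """One-line batch header: case count, count per tier, total value at risk."""
    counts = Counter(c.risk_tier for c in cases)
    total = sum((c.amount for c in cases), Decimal("0"))
    tiers = ", ".join(f"{counts[t]} {t}-risk" for t in SEVERITY if counts[t])
    return f"{len(cases)} transactions reviewed, {tiers}, total value at risk ${total:,.2f}"


def report_order(cases: list[Case]) -> list[Case]:
    """Most severe tier first; within a tier, largest amount first (stable on ties)."""
    return sorted(cases, key=lambda c: (SEVERITY.index(c.risk_tier), -c.amount))


# Hypothetical IDs and tiers; Chicago amounts are placeholders.
batch = [
    Case("TXN-0001", Decimal("4999.00"), "critical"),
    Case("TXN-0002", Decimal("489.75"), "high"),
    Case("TXN-0003", Decimal("489.75"), "high"),
    Case("TXN-0004", Decimal("12750.00"), "critical"),
    Case("TXN-0005", Decimal("2100.00"), "high"),
]
print(executive_summary(batch))
# 5 transactions reviewed, 2 critical-risk, 3 high-risk, total value at risk $20,828.50
print([c.transaction_id for c in report_order(batch)])
# ['TXN-0004', 'TXN-0001', 'TXN-0005', 'TXN-0002', 'TXN-0003']
```

Using `Decimal` for amounts keeps the reported total exact to the cent.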

Any transaction scoring above the 0.85 risk threshold triggers an immediate email notification to the fraud operations team. The analyst doesn't wait for the batch report. The critical cases arrive in real time.
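
The real-time path is a threshold check in front of the batch. A minimal Python sketch using the standard library's `email.message.EmailMessage`, assuming the 0.85 cutoff from the text is applied as a strict "above" comparison. The function name and addresses are hypothetical, and actual delivery (for example via `smtplib`) is left out so the sketch runs offline:

```python
from email.message import EmailMessage
from typing import Optional

ALERT_THRESHOLD = 0.85  # from the text: scores above 0.85 trigger an immediate email


def build_alert_email(transaction_id: str, risk_score: float, summary: str,
                      sender: str, recipients: list[str]) -> Optional[EmailMessage]:
    """Return a notification for scores above the threshold, else None."""
    if risk_score <= ALERT_THRESHOLD:
        return None
    msg = EmailMessage()
    msg["Subject"] = f"[Fraud alert] {transaction_id} risk score {risk_score:.2f}"
    msg["From"] = sender
    msg["To"] = ", ".join(recipients)
    msg.set_content(summary)
    return msg


# Placeholder ID and addresses. Sending would go through smtplib, e.g.
#   with smtplib.SMTP("smtp.example.com") as s:
#       s.send_message(msg)
msg = build_alert_email(
    "TXN-0004", 0.93, "Large first-time international wire after a profile change.",
    "fraud-agent@example.com", ["fraud-ops@example.com"],
)
```

With a strict "above 0.85" comparison, all five transactions in this overnight batch (lowest score 0.86) would trigger a notification.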

For an SIU investigator at a regional health plan, the same pattern applies with different vocabulary. The flagged events are claims instead of transactions. The pattern library covers upcoding, unbundling, and phantom billing instead of structuring and account takeover. The velocity metrics track claim submission cadence by provider instead of transaction frequency by account. But the evidence package, with its risk assessment, matched patterns, and recommended action, looks the same.

What the Fraud Analyst's Week Looks Like When the Agent Runs Overnight

Before the agent, Monday morning meant four hours of evidence assembly before the analyst could do any real investigative work. Five cases, four cleared as false positives, one actual case that got attention only after the others were processed.

After the agent, Monday morning means opening a batch report that's already organized by severity. The two critical cases are at the top, each with a complete evidence narrative, velocity data, matched patterns, and a specific recommended action. The three high-risk cases are below, also fully packaged. The analyst reads instead of assembles. The critical cases get immediate attention because the evidence is already there. The false positives get a quick review and disposition instead of a full investigation.

The math changes. A batch that took four hours of manual work takes under an hour of analyst review. Across a year of 50,000 alerts at a mid-market bank, the reduction in investigation time translates to measurable savings. Institutions implementing automated enrichment workflows report average fraud losses reduced by 42.7% in the first year (Alloy / FinanceAlliance, 2025). Not because the detection is better. Because the real fraud cases get investigated while they're still actionable instead of sitting in a queue behind thousands of false positives.

The fraud analyst's expertise finally goes where it belongs: the ambiguous cases, the emerging patterns, the investigations that require human judgment and institutional knowledge. The repetitive enrichment work, the part that burns analysts out and drives the turnover that costs 1.5 to 2 times their salary to replace, gets handled before they sit down.

Whether you manage five fraud analysts covering a regional bank, a two-person team at a payments fintech processing alerts faster than they can review them, or six analysts across two time zones at a credit union watching SLA compliance degrade as volumes grow 35% quarter over quarter, the morning changes the same way. The queue is still there. But the evidence packages are already built.

Teams that automate fraud alert enrichment often pair it with their transaction anomaly detection pipeline, tightening the loop between flagging and investigation so that both sides run without manual intervention.

This is one pattern among many. The same alert enrichment approach works wherever analysts receive flagged events and must assemble evidence packages before acting: fraud operations at banks and fintechs, SIU investigation at health plans, SOC alert triage at mid-market enterprises, trust and safety review at online marketplaces.

If your team processes flagged events and assembles evidence packages before acting:

See what it looks like for your operations →

Frequently Asked Questions

What is the false positive rate for bank transaction monitoring?

How much does it cost to investigate a single fraud alert?

How can AI reduce fraud investigation time?

What is the difference between fraud detection and fraud investigation?

How do you automate the evidence-gathering part of fraud review?

See What This Looks Like for Your Operations

Let's discuss how LasaAI can automate fraud alert enrichment for your team.