Contract Redline Review Takes Three Hours — Here Is How to Get It Done in Fifteen Minutes

How legal counsel at mid-size companies turn contract revision analysis from an afternoon of side-by-side comparison into a same-day, policy-scored summary report.

The revised agreement arrives at 2 PM. Thirty-two pages. The counterparty's counsel made changes in at least five sections — limitation of liability, indemnification, termination, payment terms, and something in the IP clause that was not there before. Some changes are tracked. Some are not.

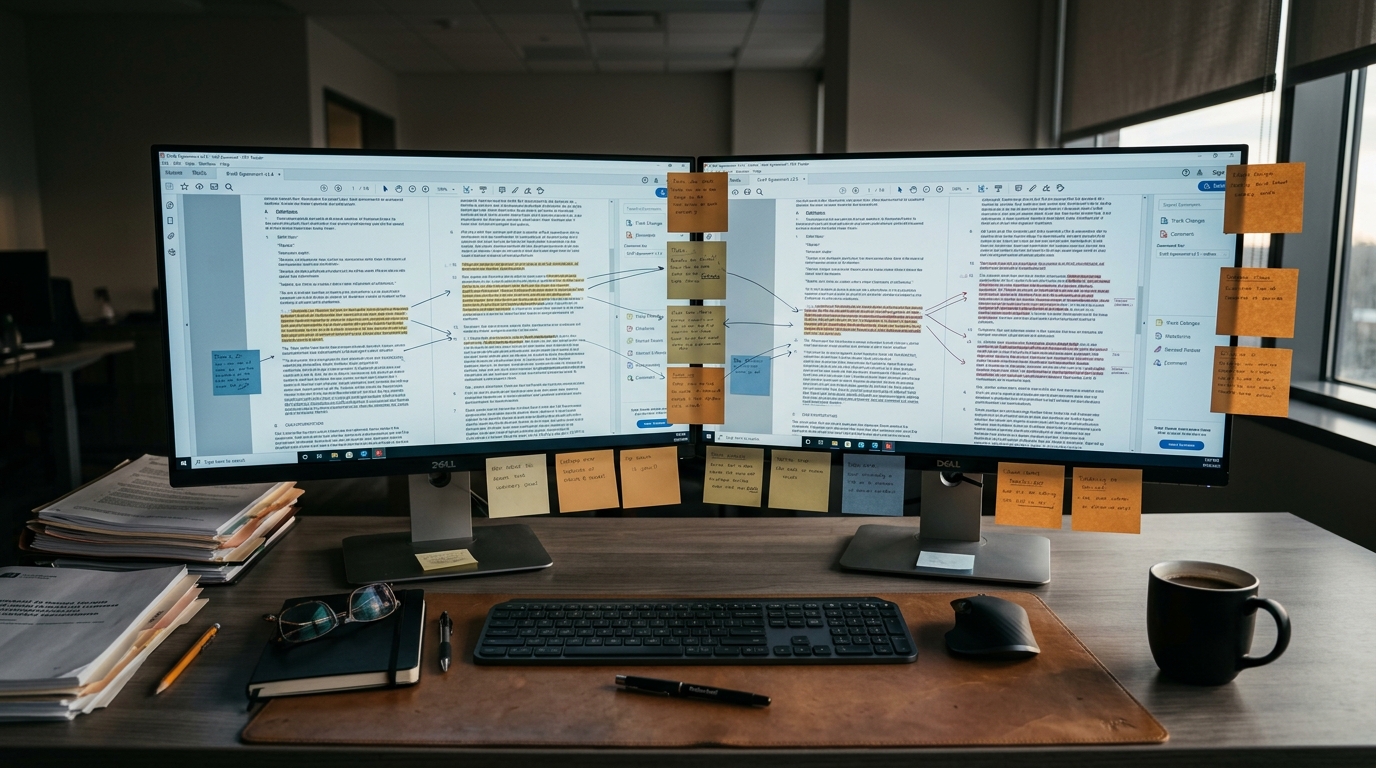

You open both documents side by side and start reading. Section 1. Section 2. You are looking for what moved, what was added, what was quietly deleted. Each change needs context: is this a formatting tweak you can accept, or did they just remove the cap on consequential damages?

For a single licensing agreement with five material changes and a dozen formatting edits, the comparison absorbs two to three hours of focused attention. Not because any individual change is hard to find, but because the analysis requires reading both versions in full, matching each deviation against your negotiation playbook, assessing the impact, and writing it all up. The executive summary for leadership. The material changes table with impact levels. The section-by-section breakdown with original versus revised language. The action items with escalation notes. The recommended response strategy for tomorrow's call.

That is one contract. At a company running five to eight negotiations simultaneously, the revision queue backs up by Wednesday.

This is the part of contract negotiation that nobody puts on the job description. Not the strategy. Not the creative problem-solving. The reading. The comparing. The classifying. Legal counsel at companies with 50 to 500 employees spend a disproportionate share of their week on this work. Industry benchmarks put the average contract review at 92 minutes, and 65% of legal practitioners say time wasted on administrative work is their primary complaint.

The hours add up. But the hours are not the worst part.

What Gets Missed When Volume Overwhelms Attention

The real risk in manual revision review is not slowness. It is the change you did not catch.

Contract redline review is the process of comparing a counterparty's revised agreement against your original draft, identifying every change, and classifying each as acceptable, negotiable, or a deal-breaker based on your company's negotiation standards. It sounds straightforward on paper — and it is, when you are reviewing one contract on a quiet Tuesday. The difficulty scales with volume, complexity, and the counterparty's willingness to track their changes honestly.

Manual contract review misses 20 to 30% of critical clause modifications, particularly in fast-moving negotiations (Spellbook, 2025). That is not a typo. Roughly one in four material changes slips through when a lawyer is scanning a dense agreement under time pressure. A liability cap quietly removed. An indemnification scope expanded by three words. A termination for convenience clause inserted in a subsection nobody expected.

Hidden redlines — changes made without tracked markup — are a known risk in contract negotiation. One documented case involved a vendor adding a non-cancelable clause without visible markups, materially changing the client's cancellation rights. The cost of discovering that kind of change after execution is not measured in hours. It is measured in litigation.

The consistency problem compounds the risk. Two lawyers on the same team can classify the same type of change differently. One sees a payment term extension from Net-30 to Net-60 as a negotiation point. The other accepts it as standard. Without a shared standard applied to every review, the company's risk posture depends on which lawyer happened to be available that afternoon.

And the output itself varies. One lawyer writes a two-page narrative memo. Another produces a bullet-point email. A third builds a spreadsheet with color-coded cells. The deal team and leadership never get the same deliverable twice, which means they never develop the muscle memory for how to read a revision summary quickly and act on it. The format problem sounds small until the CEO asks why the termination clause was not flagged in last quarter's vendor agreement, and the answer is that a different lawyer reviewed it and their memo did not include an explicit recommendation column.

The same structural problem shows up outside legal departments entirely. A contracts manager at a 150-person construction firm faces a version of this every time a subcontractor submits revised shop drawings. The manager compares the new package against the original specification section by section, checking dimensional tolerances and equipment ratings against project requirements. Thirty-five percent of construction submittals are rejected on first review, often because the comparison was rushed and a non-compliant rating slipped through. Different documents, same pattern: two versions of a structured document, a standards framework, and a human trying to catch every deviation without missing one.

The gap is not between reading and not reading. It is between reading everything once under pressure and applying the same standard every time.

This is the problem lasa.ai solves for legal teams reviewing counterparty contract revisions — a structured, policy-scored revision summary delivered the same day, every time.

See what this looks like for your contracts →

Why Spreadsheets, Chatbots, and Track Changes All Hit the Same Ceiling

The natural first move is Word's track changes feature. It works when both sides use it faithfully and the negotiation stays in a single document. It breaks down when the counterparty sends a clean copy without markup, when you are comparing across formats, or when you need to classify changes against your negotiation policy rather than just identify them. Track changes shows you what moved. It does not tell you whether the movement matters.

The next attempt is usually copying both documents into a general-purpose chat tool. The summary you get back is loose — a paragraph describing what changed, without the structured classification your deal team needs. It does not know your negotiation playbook says to reject any proposal for unlimited liability. It does not flag that the payment term change from Net-30 to Net-90 triggers your material economic threshold. And the output looks different every time you run it, which means you cannot build a process around it.

Spreadsheet trackers and basic automation connectors sit one level above that. They can log contracts, track deadlines, send reminders. They cannot read a revised indemnification clause and determine that the scope expanded beyond your standard coverage. The judgment step — match this specific change against this specific policy criterion and recommend an action — is exactly where these tools stop.

Hiring another contract attorney solves the capacity problem temporarily. A mid-level attorney costs $120K to $180K fully loaded. But inconsistency between reviewers actually gets worse with more people, not better. And the next volume spike puts you right back where you started.

Each alternative addresses a piece of the problem. None of them address the core challenge: classifying every change in a revised agreement against a specific negotiation policy and producing a structured, actionable summary report that the deal team can use immediately.

What Happens When the Revision Summary Writes Itself

The shift is not about reading faster. It is about applying the company's negotiation standards to every change, every time, and delivering a structured report instead of a lawyer's handwritten notes.

The original draft, the counterparty's revised version, and the negotiation policy go in. The agent reads both documents, identifies every change — tracked or untracked — and classifies each against the policy framework. The output is a structured revision summary report ready for the legal team and leadership.
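Catching untracked changes does not depend on the counterparty's markup at all, because the plain text of both versions can be compared clause by clause. The Python sketch below is a minimal illustration of that idea, not lasa.ai's implementation. It assumes each clause begins on its own line with a dotted section number such as "8.2", and the function names `split_clauses`, `find_changes`, and `word_diff` are hypothetical. It uses only the standard library's `re` and `difflib` modules.

```python
import difflib
import re


def split_clauses(text: str) -> dict[str, str]:
    """Map each section number (e.g. "8.2") to its clause text.

    Assumption: every clause starts on its own line with a dotted section
    number. Continuation lines are appended to the current clause. A
    continuation line that happens to start with a number would be misread,
    so treat this as illustrative only.
    """
    clauses: dict[str, str] = {}
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        match = re.match(r"(\d+(?:\.\d+)*)\s+(.*)", line)
        if match:
            current = match.group(1)
            clauses[current] = match.group(2)
        elif current is not None and line:
            clauses[current] += " " + line
    return clauses


def find_changes(original: str, revised: str) -> list[tuple[str, str, str, str]]:
    """Return (section, kind, old_text, new_text) for every clause that differs.

    The comparison runs on plain text, so it reports a change whether or not
    the counterparty tracked it.
    """
    old, new = split_clauses(original), split_clauses(revised)

    def section_key(section: str) -> list[int]:
        # Sort "2.10" after "2.9" by comparing numeric parts.
        return [int(part) for part in section.split(".")]

    changes = []
    for section in sorted(old.keys() | new.keys(), key=section_key):
        before, after = old.get(section), new.get(section)
        if before == after:
            continue
        if before is None:
            changes.append((section, "added", "", after))
        elif after is None:
            changes.append((section, "deleted", before, ""))
        else:
            changes.append((section, "modified", before, after))
    return changes


def word_diff(before: str, after: str) -> list[str]:
    """Word-level view of one modified clause: '- word' removed, '+ word' added."""
    return [tok for tok in difflib.ndiff(before.split(), after.split())
            if tok.startswith(("- ", "+ "))]
```

Given an original where section 8.2 caps liability and a clean revised copy where 8.2 reads "Liability is not capped" and a new section 9.4 appears, `find_changes` reports a `modified` entry for 8.2 and an `added` entry for 9.4 with no markup involved. A production pipeline also has to cope with renumbered sections, which this line-based split would report as a deletion plus an addition.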

This is the difference between an AI agent and a chatbot. A chatbot gives you a paragraph. An agent follows a defined, auditable process: compare documents, extract changes, classify against policy criteria, assess impact, format into a structured deliverable. Agent-level outcomes with workflow-level reliability. The same process runs the same way every time, with configurable policy thresholds and escalation rules.

The report opens with an executive summary — three to five bullet points covering the most critical changes and the overall risk posture. A material changes table follows, with columns for section, change description, impact level (critical, high, moderate, low), recommendation (accept, negotiate, reject), and reasoning tied back to the specific policy criterion that triggered the classification.

For a 200-person enterprise software company reviewing counterparty revisions to a licensing agreement, the material changes table might show: IP clause changes flagged as critical with a reject recommendation because the policy explicitly prohibits IP assignment to the counterparty. A liability cap removal rated high with a reject recommendation because the policy rejects proposals for unlimited liability. A payment term extension from Net-30 to Net-90 rated moderate with a negotiate recommendation because the policy allows negotiation on standard payment terms but triggers an escalation review for material economic impact.
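The classification step amounts to matching each change against an explicit playbook rule and recording which rule fired. The Python sketch below encodes the three examples above, plus the formatting-only case, as a small rule table. The category labels, fact keys such as `cap_removed`, and the fallback behavior are assumptions for illustration; extracting those facts from clause text is the hard part and is not shown.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Change:
    section: str
    category: str  # hypothetical labels: "ip", "liability", "payment", "formatting"
    facts: dict = field(default_factory=dict)  # details extracted from the revised clause


@dataclass
class Rule:
    criterion: str  # wording of the playbook criterion, quoted in the report
    applies: Callable[[Change], bool]
    impact: str
    recommendation: str
    escalate: bool = False


# Hypothetical playbook. Rules are checked in order, so list the most severe first.
POLICY = [
    Rule("Policy prohibits IP assignment to the counterparty",
         lambda c: c.category == "ip" and c.facts.get("assigns_ip_to_counterparty", False),
         "critical", "reject"),
    Rule("Policy rejects proposals for unlimited liability",
         lambda c: c.category == "liability" and c.facts.get("cap_removed", False),
         "high", "reject"),
    Rule("Payment terms are negotiable; extensions trigger economic escalation review",
         lambda c: c.category == "payment"
         and c.facts.get("new_net_days", 0) > c.facts.get("old_net_days", 0),
         "moderate", "negotiate", escalate=True),
    Rule("Formatting-only edits are accepted",
         lambda c: c.category == "formatting",
         "low", "accept"),
]


def classify(change: Change) -> dict:
    """Apply the first matching rule; route anything unmatched to counsel."""
    for rule in POLICY:
        if rule.applies(change):
            return {"section": change.section, "impact": rule.impact,
                    "recommendation": rule.recommendation,
                    "criterion": rule.criterion, "escalate": rule.escalate}
    # No rule matched: never auto-accept a change the playbook does not cover.
    return {"section": change.section, "impact": "moderate",
            "recommendation": "negotiate",
            "criterion": "No playbook criterion matched; needs counsel review",
            "escalate": True}
```

The fallback is a deliberate design choice in this sketch: a change the playbook does not cover is escalated rather than silently accepted, so gaps in the policy surface as review items instead of missed changes.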

Below the summary, each section with changes gets a detailed breakdown — original language versus revised language, impact assessment, and recommendation. The report closes with numbered action items ordered by urgency (draft rejection language for the IP clause, evaluate the payment term compromise, log counter-offers with justification) and a recommended response strategy covering leverage points and risk mitigation for the next round.

During due diligence on an acquisition, a corporate development associate at a mid-market private equity firm faces the same challenge with disclosure schedules and purchase agreements. The target's counsel uploads revisions overnight, and the associate needs a materiality assessment before the morning call. The data shape adapts — representations and warranties replace contract clauses, deal thresholds replace negotiation policy criteria — but the structure of the report is the same: change identification, classification, impact, recommendation, action items.

What Tuesday Looks Like When the Revision Queue Clears by Lunch

The counterparty sends back the revised agreement at 2 PM. By 2:15, the revision summary report is in your inbox. Executive summary, material changes table, section-by-section analysis, action items, recommended response. Every classification scored against the same negotiation playbook. Every escalation trigger applied consistently.

You spend fifteen minutes reading the summary, not three hours writing it. The IP assignment clause is flagged as reject — you already knew that would be the call, but now you have the policy reasoning documented. The payment term extension is flagged as negotiate, and the escalation protocol note reminds you that changes exceeding the 5% material economic threshold require VP of Legal sign-off. The formatting corrections in section 4.2 are auto-accepted. The action items are already ordered by urgency.
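The 5% escalation trigger is simple arithmetic once the playbook defines how economic impact is measured. As one hypothetical measure, the Python sketch below treats a longer payment term as the carrying cost of being paid later (simple interest at an assumed annual cost of capital) and compares that cost with the contract value. Both the formula and the function names are assumptions, not a standard.

```python
MATERIAL_THRESHOLD = 0.05  # the 5% material economic threshold from the playbook


def payment_delay_cost(contract_value: float, old_net_days: int,
                       new_net_days: int, annual_rate: float) -> float:
    """Approximate cost of waiting longer for payment, using simple interest.

    Hypothetical measure: contract value x annual cost of capital x extra days / 365.
    """
    extra_days = max(new_net_days - old_net_days, 0)
    return contract_value * annual_rate * extra_days / 365


def needs_vp_signoff(economic_impact: float, contract_value: float,
                     threshold: float = MATERIAL_THRESHOLD) -> bool:
    """True when the impact exceeds the threshold share of contract value."""
    if contract_value <= 0:
        raise ValueError("contract_value must be positive")
    return economic_impact / contract_value > threshold
```

For an illustrative $500,000 agreement and a 10% cost of capital, `payment_delay_cost(500_000, 30, 90, 0.10)` comes to about $8,219, roughly 1.6% of contract value, so `needs_vp_signoff` returns `False` for that Net-30 to Net-90 change on its own. A playbook that measures impact differently would reach a different answer, which is why the measure belongs in the written policy rather than in each reviewer's head.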

The deal team has what they need for tomorrow's call. The documentation is clean enough for the contract management system. And you are working on the negotiation strategy instead of still reading section 8.

There is a second-order benefit that takes a few weeks to notice. Because the report structure is identical every time, the deal team starts reading it faster. The VP of Legal knows exactly where to look for the escalation triggers. The business lead skims the executive summary and jumps to the action items. The contract management system gets consistent entries. What used to be a bottleneck in the negotiation cycle becomes a checkpoint that takes fifteen minutes instead of half a day.

Whether you are reviewing counterparty revisions to enterprise licensing agreements, comparing subcontractor submittals against project specifications, or assessing disclosure schedule updates during an acquisition, the morning changes the same way. The revision analysis is done. The structured report is ready. Your judgment goes where it actually matters — the negotiation, not the comparison.

Teams that automate contract clause analysis with agents like the contract clause analyzer often find that revision summarization is the natural next step — once you have standardized how you flag risky clauses, standardizing how you review counterparty changes follows the same logic.

lasa.ai builds AI agents that turn contract revision review into a same-day, structured deliverable. Whether your team reviews licensing agreements, vendor contracts, or acquisition documents, the pattern is the same — and so is the agent.

See what a revision summary looks like for your contracts.

See what this looks like for your process →

Frequently Asked Questions

How long does it take to review a redlined contract manually?

What should a contract revision summary report include?

Can automation accurately classify contract changes against a negotiation playbook?

What is the difference between redlining and blacklining a contract?

How do legal teams handle hidden redlines in counterparty contracts?

See What This Looks Like for Your Process

Let's discuss how LasaAI can automate this workflow for your team.